Statistics, explained in plain language.

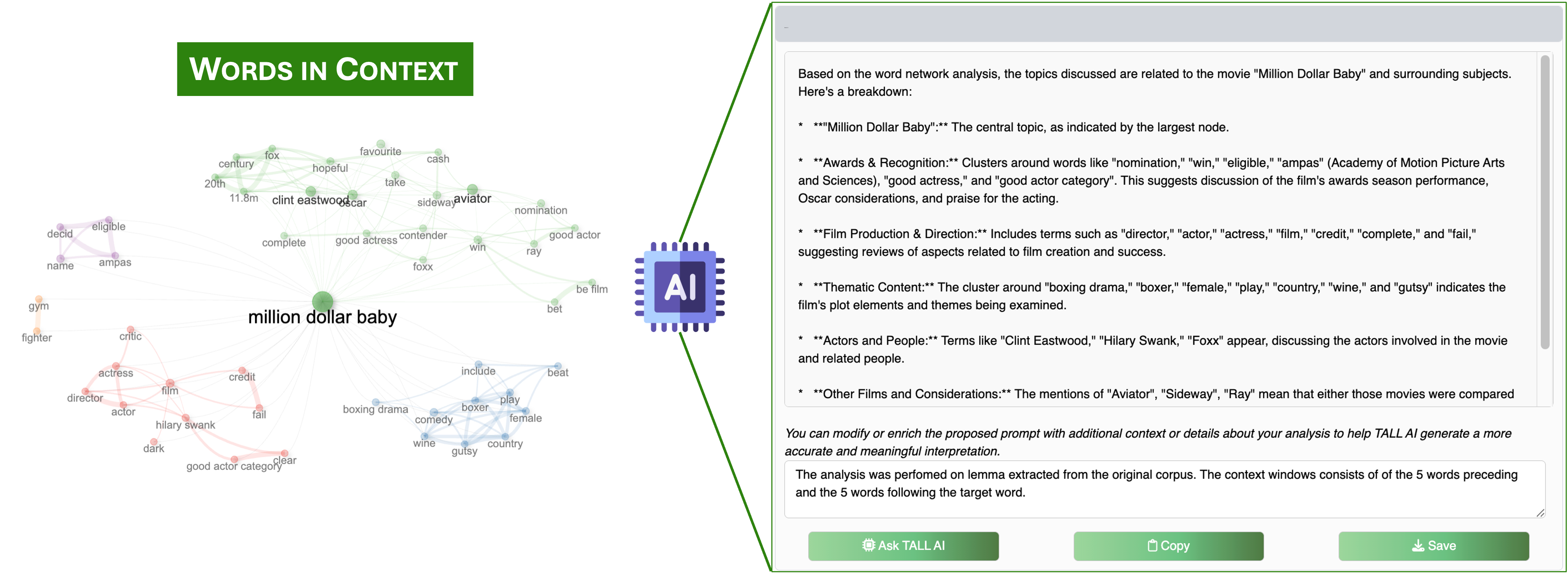

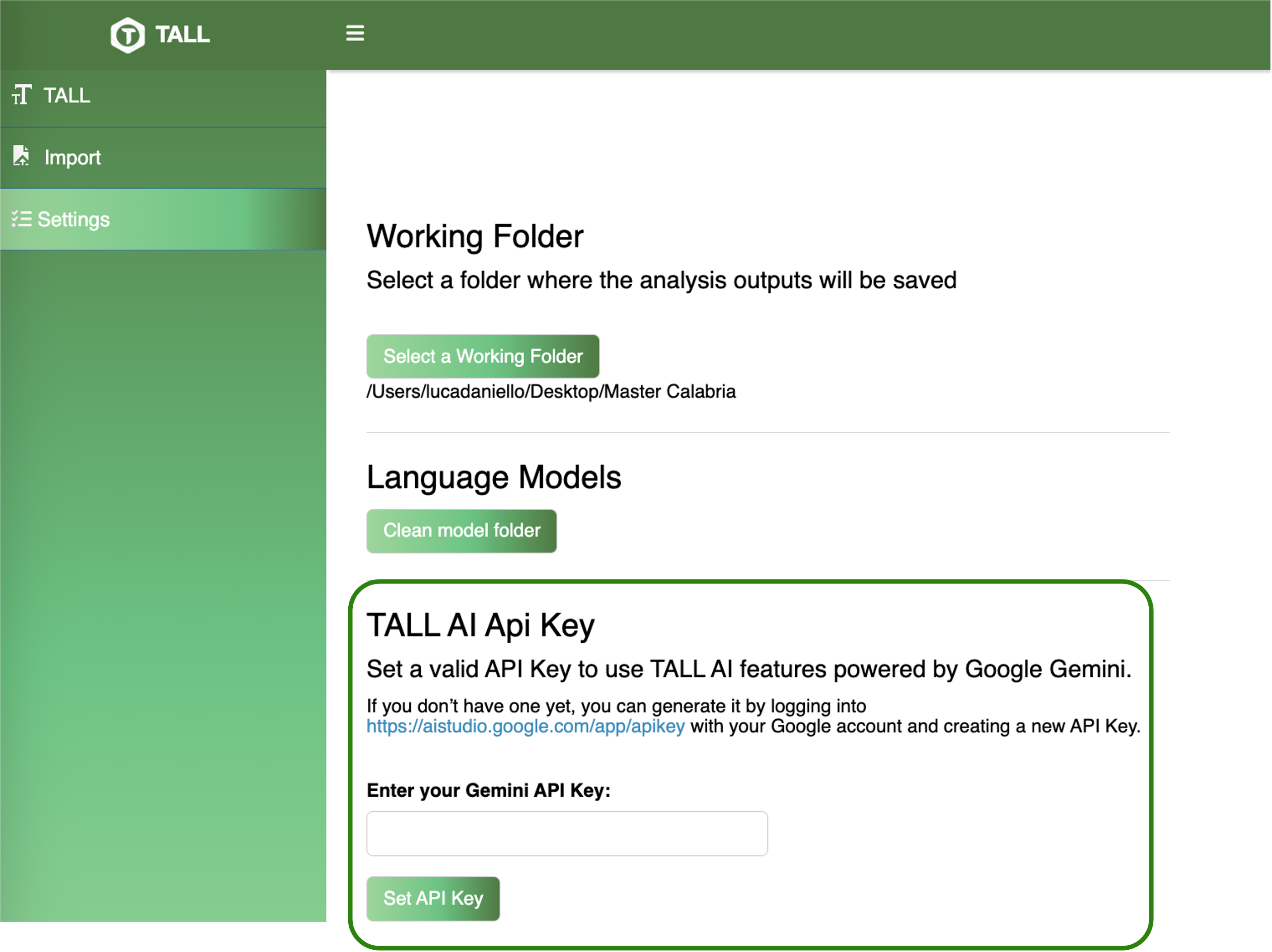

A context-aware assistant, built on the Google Gemini API, that generates contextualised explanations for every analytical step in TALL. It interprets topic clusters, reads sentiment distributions, suggests next analyses, and comments on network communities in natural language, right alongside the numerical output.

Integrated with Google Gemini · Medium (16k tokens) or Large (32k tokens) modes